BigQuery is Google’s database that allows you to quickly query and analyze terabytes of data. As seen in article BigQuery: WITH clause, BigQuery offers many more features than standard SQL. Among these is native support for geographic information system, or more simply GIS, functions and their data types.

In this article, we will look at some of these functions to extract some information about the City of London’s bike sharing service and visualize it using BigQuery Geo Vis. In addition, we will see the use of the STRUCT construct to group semantically related columns. We introduce, then, the problem by presenting the problem we want to address and the data we have.

Dataset

The dataset we will use in this paper will consist of over 24 million bike share trips in central London. The bike sharing service is now popular in many countries around the world and particularly in large urban centers.

Essentially, a person can go to a dock located on a street corner in the city and rent a bike by the hour. To end the rental, the person must bring the bike back to one of the stations, not necessarily the station of departure. Based on the time of use the person is charged an amount. In some cases, thanks to the annual subscription, if the duration of the rental is less than a certain threshold, no cost is charged. This service is oriented to reduce the number of motor vehicles on urban roads, thus reducing harmful emissions. In addition, the use of bicycles is healthy. In recent years, in addition to bike sharing, other similar services have emerged that offer the possibility of renting electric cars or scooters.

Apart from social issues, the service provider (public or private) needs to monitor vehicle use. Data analysis allows, in fact, to define strategies for fleet expansion, vehicle maintenance and/or user assistance.

The dataset we will use is public and consists of two tables, one containing all the trips that the bikes have made, the other the locations of the docking stations in the city of London.

Exercise

We want to extract some statistics relative to cyclists’ trips. Specifically, we want to identify the routes, i.e., the pairs of rental start and end stations, on which cyclists are fastest.

For simplicity and since we do not have GPS data for each ride, we assume that the travel speed is constant throughout the ride. In addition, the route is approximated to a straight line joining the two stations.

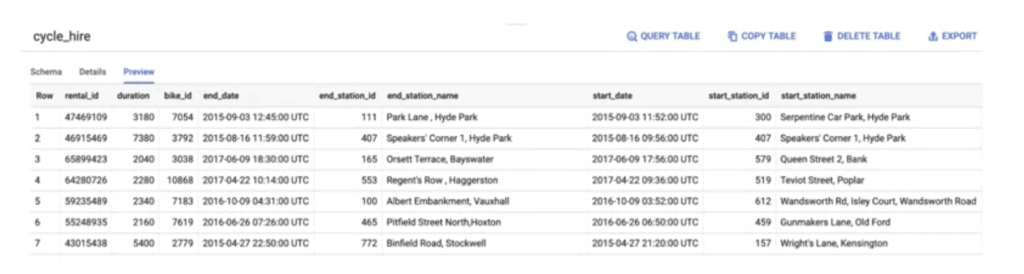

The structure of the table for the individual runs is shown in the figure.

To calculate the average speed, we need the distance traveled and the duration. For the duration we could make a difference between the start and end timestamps. Fortunately there is already a field that provides this information (duration). But if you look at it, the values are very high. What do they represent? To find out, just go to the Schema tab where you will also find the description of each field (when present). In this case it gives us the information that the value saved in the duration field is the travel time expressed in seconds. For this reason it is very useful to add the column descriptions when creating tables!!!

For the calculation of the speed we need only the distance. In this case, however, there is no field that provides this information. The only data available to us are the arrival and departure stations. Therefore, we need to retrieve the geographic position information from the other table. This table contains not only the id and the name of the station, but also latitude and longitude. Since BigQuery natively supports GIS functions, we can use the ST_DISTANCE function, which will provide the distance (expressed in meters) on a straight line between two geographical points. There are other GIS functions as well. These always begin with the prefix ST_.

To construct our query that extracts the routes with a higher average travel speed, we must initially perform a join between the two tables in our dataset. In this way we will associate latitude and longitude information with each station (departure and arrival) of the routes traveled. Since the latitude and longitude columns are not of a geographic data type by nature, we need to convert them to formal geographic points before we can use the ST_DISTANCE function. The ST_GEOGPOINT function is used for the conversion.

Since we will want to draw these lines on a map, we also need to include the ST_MAKELINE function in the result. This way we could import the data into Geo Vis.

This is the first part of the query. We can use the WITH construct, seen in article BigQuery: WITH clause, to save this temporary result and use it to compute aggregation functions and/or filter the data.

To make the result more readable and take advantage of BigQuery’s capabilities, we often use the STRUCT construct. In the example we are analyzing, where the table has few columns, it is not strictly necessary, but in other cases it can be very useful. An example of tables with many columns is the one related to Google Analytics.

Returning to our example, the first part of the query will have to join two instances of the stations table. This is because we need to include the geographic information of both the starting and ending station. Using the STRUCT construct we can group the information of each station by associating a prefix. This way it is clear to distinguish the geographic coordinates of the two stations. This process of merging several tables into a single table is called denormalization. It is often a great way to store data in order to speed up querying by BigQuery without having to join between tables each time. It’s also highly performant because BigQuery stores data in a column format instead of a record format, which means you’re not penalized for having very large schemas.

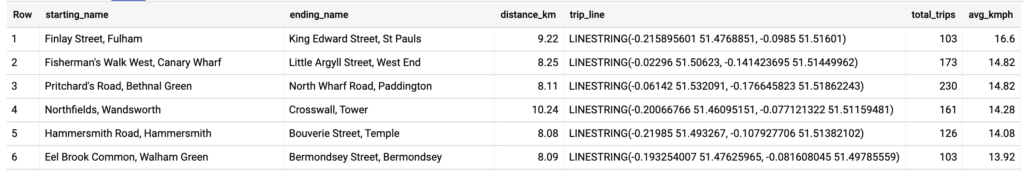

Now that we’ve finished preprocessing all of our data, it’s time to write the query to calculate the statistics we’re interested in. Let’s use the table defined with the WITH clause. Simply enter its name in the FROM clause. Let’s extract, then, the names of the stations, round up the distance of the trip, convert it from meters to kilometers and count all trips between two stations. Since we want to have reliable average speed statistics, we filter only station pairs that have at least 100 trips using the HAVING clause. Finally, we sort the result by average speed and limit the display to the first 100 results.

The result we get is as shown below.

The final query is as follows.

WITH staging AS (

SELECT

STRUCT(

start_stn.name,

ST_GEOGPOINT(start_stn.longitude, start_stn.latitude) AS point,

start_stn.docks_count,

start_stn.install_date

) AS starting,

STRUCT(

end_stn.name,

ST_GEOGPOINT(end_stn.longitude, end_stn.latitude) AS point,

end_stn.docks_count,

end_stn.install_date

) AS ending,

STRUCT(

rental_id,

bike_id,

duration, -- seconds

ST_DISTANCE(

ST_GEOGPOINT(start_stn.longitude, start_stn.latitude),

ST_GEOGPOINT(end_stn.longitude, end_stn.latitude)

) AS distance, -- meters

ST_MAKELINE(

ST_GEOGPOINT(start_stn.longitude, start_stn.latitude),

ST_GEOGPOINT(end_stn.longitude, end_stn.latitude)

) AS trip_line, -- straight line (for GeoViz)

start_date,

end_date

) AS bike

FROM `bigquery-public-data.london_bicycles.cycle_stations` AS start_stn

LEFT JOIN `bigquery-public-data.london_bicycles.cycle_hire` AS b

ON start_stn.id = b.start_station_id

LEFT JOIN `bigquery-public-data.london_bicycles.cycle_stations` AS end_stn

ON end_stn.id = b.end_station_id

)

-- Find the fastest avg biking pace for rides over 30 mins

SELECT

starting.name AS starting_name,

ending.name AS ending_name,

ROUND(bike.distance/1000,2) distance_km,

ST_UNION_AGG(bike.trip_line) AS trip_line,

COUNT(bike.rental_id) AS total_trips,

ROUND(

AVG(

(bike.distance / 1000) -- meters --> km

/ (bike.duration / 60 / 60) -- seconds --> hours

)

,2)

AS avg_kmph

FROM staging

WHERE bike.duration > (30 * 60) -- at least 30 minutes = 1800 seconds

GROUP BY

starting.name,

ending.name,

bike.distance

HAVING total_trips > 100

ORDER BY avg_kmph DESC

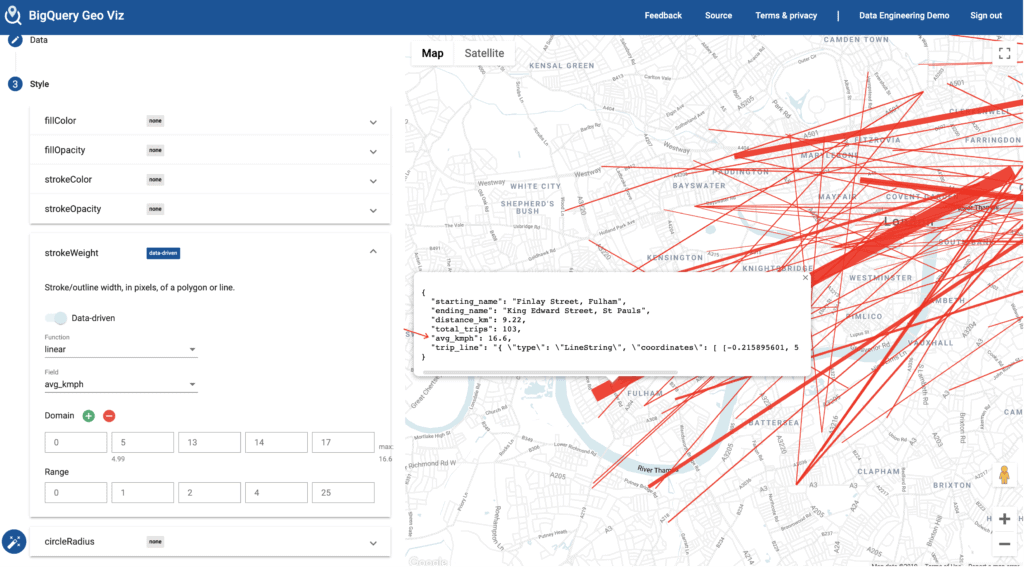

LIMIT 100; To visualize our results on a map we use BigQuery Geo Vis. Geo Vis is a web application that allows you to run queries in BigQuery and visualize geographic data points. You can also change the graphical styles of the represented data and base their representations on the data itself.

Using the previous query and playing with the style settings, we can highlight routes with a higher average speed quickly and intuitively. One possible result is shown here.